Technical SEO for engineers is the discipline of configuring crawl access, indexing signals, rendering, performance, structured data, and site architecture so that search engines can efficiently discover, understand, and rank a manufacturing website's product, process, material, and certification pages. We treat technical SEO as a code-and-infrastructure layer that translates engineering decisions into procurement-intent visibility.

This guide covers the core definition of technical SEO for engineering teams, the crawl and indexing fundamentals that govern catalog discovery, site architecture patterns for industrial product taxonomies, page experience and Core Web Vitals tuning, structured data and schema implementation, rendering and hosting tradeoffs, and audit and monitoring workflows.

The first set of sections defines technical SEO for engineers, separates it from on-page and off-page work, and assigns ownership of the stack to the engineering team that ships the site.

The middle sections walk through how Googlebot crawls large catalogs, how robots.txt and XML sitemaps shape the index, how canonical tags resolve duplication, and how URL hierarchies, internal linking, and faceted navigation determine which pages compound topical authority.

The page experience and structured data sections explain Largest Contentful Paint, Interaction to Next Paint, Cumulative Layout Shift, Time to First Byte, and the schema types that ground product, organization, and breadcrumb entities.

The closing sections compare server-side, client-side, and hybrid rendering for catalog frameworks, then outline the audit tools, log file workflows, and stakeholder dashboards that keep technical SEO health visible to procurement and marketing teams.

What Does Technical SEO Mean for Engineers Working on Manufacturing Websites?

Technical SEO for engineers working on manufacturing websites means owning the crawl, indexing, rendering, performance, and structured data layers that determine whether a search engine can discover and understand product, process, and material pages. Sub-sections below break down its boundaries, ownership, disciplines, and crawler interpretation.

How Does Technical SEO Differ From On-Page and Off-Page SEO?

Technical SEO differs from on-page and off-page SEO by operating at the infrastructure layer rather than the content or link layer. On-page SEO governs the words, headings, and intent inside a page. Off-page SEO governs external authority signals such as backlinks. Technical SEO governs how the site is crawled, rendered, and parsed before content is even read. Engineers handle server response codes, canonical resolution, sitemap generation, and JavaScript hydration. Marketing teams cannot fix these layers without engineering access. The three disciplines reinforce each other, but technical SEO is the foundation that lets the other two count: the basics of SEO-friendly content only compound once the technical foundation exists.

Why Should Engineers Own the Technical SEO Layer of a Manufacturing Site?

Engineers should own the technical SEO layer of a manufacturing site because every relevant control surface lives in the codebase, the build pipeline, the CDN configuration, and the hosting stack. Robots directives, canonical tags, hreflang, structured data, and rendering modes ship through engineering workflows, not content management interfaces. A marketing team can request changes, but only the engineering team can validate them across staging, preview, and production. Pushing technical SEO into the engineering backlog follows the same logic that drives ownership decisions across industrial SEO: technical decisions need technical owners.

What Core Disciplines Make Up the Technical SEO Stack for Industrial Sites?

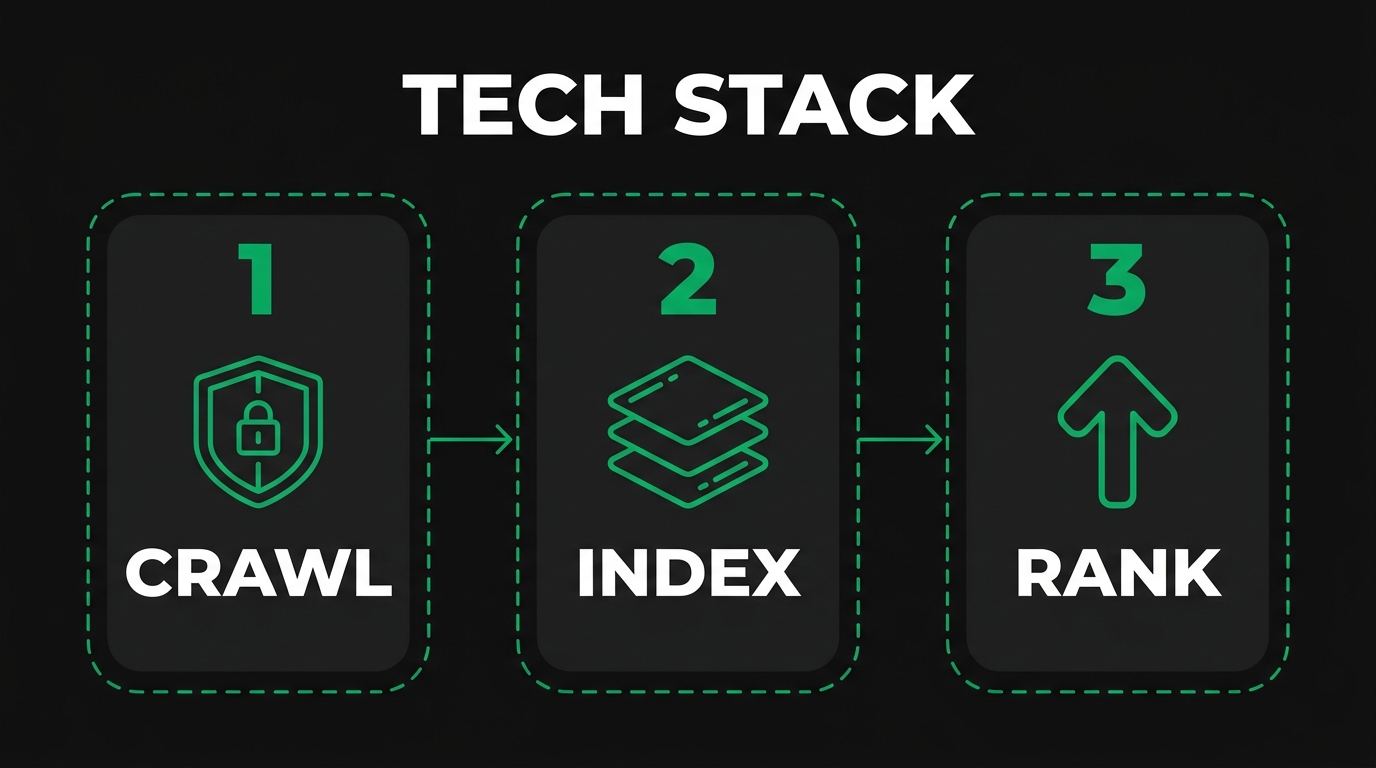

The core disciplines of the technical SEO stack for industrial sites are crawl management, indexation control, site architecture, rendering strategy, page experience optimization, structured data implementation, internationalization, and observability. Each discipline maps to a measurable signal: crawl management to log file activity, indexation to index coverage reports, architecture to internal link graphs, rendering to JavaScript execution, performance to Core Web Vitals, schema to rich result eligibility, internationalization to hreflang clusters, and observability to dashboards. Industrial catalogs add product specifications, certifications, and CAD files to every discipline.

How Do Search Engine Crawlers Interpret a Technical SEO Implementation?

Search engine crawlers interpret a technical SEO implementation by reading robots.txt, fetching URLs from sitemaps and discovered links, rendering the pages, and recording canonical signals in the index. According to Bing Webmaster Tools Help Documentation, Bing Webmaster Tools shows webmasters how Bing crawls and indexes their websites and lets them control visibility in Bing's search results; submitting XML sitemaps improves Bing's ability to find and index pages. Crawlers do not infer intent from a CMS; they parse what the server returns. Clean technical SEO produces clean signals, which is a prerequisite for earning rankings under any helpful-content policy. The next section moves from interpretation to crawl mechanics for large manufacturing catalogs.

What Are the Crawl and Indexing Fundamentals Engineers Must Understand?

The crawl and indexing fundamentals engineers must understand are how Googlebot reaches a catalog, how crawl budget is allocated, how robots.txt scopes access, how XML sitemaps publish discovery hints, and how canonical tags consolidate duplicate URLs. Each fundamental ties to a concrete file, header, or response code in the engineering stack.

How Does Googlebot Crawl a Large Manufacturing Catalog?

Googlebot crawls a large manufacturing catalog by issuing parallel HTTP requests against discovered URLs while respecting host-level rate limits. According to Google Search Central, large sites with 1 million+ unique pages and content that changes moderately often require crawl budget optimization, and a site's crawl budget is determined by two main elements: crawl capacity limit and crawl demand. Manufacturing catalogs with deep process, material, and certification combinations push past these thresholds quickly. Engineers should treat crawl as a finite resource that responds to server speed, content quality, and link structure.

What Is a Crawl Budget and How Does It Affect Industrial Catalogs?

A crawl budget is the number of URLs Googlebot is willing and able to fetch from a site within a given window. It affects industrial catalogs because process-by-material-by-tolerance pages multiply faster than crawl capacity. When budget is wasted on duplicate filter URLs, faceted variations, or expired part numbers, the canonical product pages risk slow re-indexing. Crawl budget is not a published number; it is observed in Search Console crawl stats and server logs. The engineering goal is to spend budget on revenue-bearing pages: capability pages, hero process pages, and high-margin product families.

How Should Engineers Configure Robots.txt for Industrial Sites?

Engineers should configure robots.txt for industrial sites by allowing crawl of all production catalog paths, disallowing infinite-space query parameters, blocking staging hostnames at the DNS level rather than via robots.txt, and keeping the file small. Google enforces a robots.txt file size limit of 500 kibibytes (KiB), and content after the maximum file size is ignored, per Google Search Central. Use Disallow surgically: blocking a path also prevents Google from seeing canonicals or noindex directives inside it. Pair robots.txt with meta robots tags for finer control of indexation, and apply noindex only to pages that remain crawlable. For deeper rules, see robots.txt best practices for industrial sites.
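The rules above can be exercised before deployment. The following is a minimal sketch, assuming hypothetical `/search` and `/cart/` paths and an `example.com` hostname that do not come from this guide. It uses Python's standard-library `urllib.robotparser`, which does plain prefix matching and does not implement Google's wildcard extensions, so treat it as a smoke test rather than a faithful Googlebot emulation.

```python
from urllib.robotparser import RobotFileParser

MAX_ROBOTS_BYTES = 500 * 1024  # the 500 KiB limit cited above

# Hypothetical rules: block internal search and cart paths, allow the rest.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
Allow: /
Sitemap: https://example.com/sitemap-index.xml
"""


def check_robots(robots_txt: str, must_allow: list[str], must_block: list[str]) -> list[str]:
    """Return human-readable problems; an empty list means the file passed."""
    problems = []
    if len(robots_txt.encode("utf-8")) > MAX_ROBOTS_BYTES:
        problems.append("robots.txt exceeds 500 KiB")
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    for url in must_allow:
        if not parser.can_fetch("Googlebot", url):
            problems.append(f"blocked but should be crawlable: {url}")
    for url in must_block:
        if parser.can_fetch("Googlebot", url):
            problems.append(f"crawlable but should be blocked: {url}")
    return problems


problems = check_robots(
    ROBOTS_TXT,
    must_allow=["https://example.com/capabilities/cnc-milling/"],
    must_block=["https://example.com/search?q=6061", "https://example.com/cart/checkout"],
)
```

Running a check like this in the build pipeline catches the common failure where a broad Disallow accidentally covers a revenue-bearing catalog path.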

What Role Do XML Sitemaps Play in Manufacturing SEO?

XML sitemaps in manufacturing SEO publish a clean, machine-readable list of canonical URLs and lastmod timestamps to accelerate discovery and re-crawl; the optional priority and changefreq hints can be omitted because Google ignores them. Sitemaps are particularly valuable for catalogs because new SKUs, certifications, and capability pages may not be linked from primary navigation. Split sitemaps by content type: products, processes, materials, locations, and editorial. Compress with gzip, reference each from a sitemap index, and submit through Search Console and Bing Webmaster Tools. Update lastmod only when content meaningfully changes; spurious updates erode the signal's value.
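As an illustration of the per-content-type sitemap described above, here is a minimal sketch that serializes canonical URLs with lastmod dates using the standard library. The function name `build_sitemap` and the example URL are hypothetical; a real generator would read URLs and last-change dates from the catalog database.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: list[tuple[str, date]]) -> str:
    """Serialize (canonical_url, last_meaningful_change) pairs as a sitemap."""
    ET.register_namespace("", SITEMAP_NS)  # emit the default namespace, no prefix
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        ET.SubElement(url, f"{{{SITEMAP_NS}}}lastmod").text = lastmod.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


products_sitemap = build_sitemap(
    [("https://example.com/capabilities/cnc-milling/", date(2024, 5, 1))]
)
```

Passing the date of the last meaningful content change, rather than the build time, keeps lastmod trustworthy.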

How Do Canonical Tags Prevent Duplicate Content on Product Pages?

Canonical tags prevent duplicate content on product pages by declaring which URL is the preferred indexable version when multiple URLs serve substantively equivalent content. According to IETF RFC 6596, the target canonical IRI must identify content that is either duplicative or a superset of the content at the context referring IRI, and applications such as search engines may index content only from the target IRI. For manufacturing, this resolves the common case where the same alloy grade appears under multiple categories or filter combinations. Self-referential canonicals on every product page reduce ambiguity. Avoid canonicalizing variants to a category page; consolidate to the most specific equivalent. The next section turns from indexing controls to architecture decisions that shape topical authority.
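A crawler-side check for self-referential canonicals might look like the sketch below. It assumes the page HTML has already been fetched; the class and function names are illustrative, and the comparison is an exact string match, so a production version would normalize trailing slashes and hostname case first.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel_tokens = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_tokens and attr.get("href"):
            self.canonicals.append(attr["href"])


def canonical_status(page_url: str, html: str) -> str:
    """Classify a page as 'self', 'points-elsewhere', 'missing', or 'multiple'."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing"
    if len(finder.canonicals) > 1:
        return "multiple"
    return "self" if finder.canonicals[0] == page_url else "points-elsewhere"
```

For example, a filter URL such as `https://example.com/materials/6061-aluminum/?sort=price` whose canonical names the clean product URL would report `points-elsewhere`, which is the intended outcome for a duplicate.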

What Are the Site Architecture Best Practices for Manufacturing Catalogs?

The site architecture best practices for manufacturing catalogs are predictable URL hierarchies, cross-linked process and material clusters, shallow click depth to revenue pages, and conservative faceted navigation. Architecture decisions taken once at the framework level compound across thousands of pages, which is why the approach mirrors patterns covered in site architecture best practices for large manufacturing catalogs.

How Should Engineers Structure URL Hierarchies for Process and Material Pages?

Engineers should structure URL hierarchies for process and material pages by using a stable, lowercase, hyphenated path that reflects the procurement query. Recommended patterns: /capabilities/{process}/, /materials/{material}/, /capabilities/{process}/{material}/, and /industries/{industry}/. Avoid encoding tracking parameters in the canonical path. Schema entities support disambiguation at the data layer, and according to Schema.org, sameAs is the URL of a reference Web page that unambiguously indicates the item's identity. Pair URL hierarchy with consistent breadcrumbs, internal links, and BreadcrumbList structured data so that the path on disk matches the path in the index.
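The path patterns above can be enforced in one place so every template produces the same shape. This is a minimal sketch; `slugify` and `capability_path` are hypothetical helper names, and the slug rule shown (ASCII letters, digits, hyphens) is one reasonable convention, not the only one.

```python
import re
from typing import Optional


def slugify(label: str) -> str:
    """Lowercase the label and collapse every non-alphanumeric run into one hyphen."""
    return re.sub(r"[^a-z0-9]+", "-", label.lower()).strip("-")


def capability_path(process: str, material: Optional[str] = None) -> str:
    """Build /capabilities/{process}/ or /capabilities/{process}/{material}/."""
    parts = ["capabilities", slugify(process)]
    if material:
        parts.append(slugify(material))
    return "/" + "/".join(parts) + "/"


path = capability_path("CNC Milling", "6061 Aluminum")
```

Centralizing path construction means breadcrumbs, internal links, and sitemap entries all derive from the same function and cannot drift apart.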

What Is the Recommended Internal Linking Pattern for Topical Authority?

The recommended internal linking pattern for topical authority is the hub-and-spoke pattern: a pillar page for each major capability or process links down to specific material, tolerance, and industry sub-pages, and each sub-page links back to the pillar plus laterally to siblings. This concentrates link equity on the pillar, distributes context to leaves, and helps crawlers infer topical clusters. Use descriptive anchor text that mirrors the target query. Avoid orphan pages; every published URL should be reachable from at least two other internal pages. Audit internal links quarterly with a crawler.
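The two-referrer rule can be checked against a crawl export. The sketch below assumes the link graph has already been reduced to a mapping from each page to the set of internal pages it links to; the paths are invented for illustration.

```python
def under_linked(links: dict[str, set[str]], minimum: int = 2) -> dict[str, int]:
    """Map each page with fewer than `minimum` distinct internal referrers to its count."""
    referrers: dict[str, set[str]] = {page: set() for page in links}
    for source, targets in links.items():
        for target in targets:
            if target != source:  # self-links do not count as referrers
                referrers.setdefault(target, set()).add(source)
    return {page: len(refs) for page, refs in referrers.items() if len(refs) < minimum}


PILLAR = "/capabilities/cnc-milling/"
ALUMINUM = "/capabilities/cnc-milling/6061-aluminum/"
TITANIUM = "/capabilities/cnc-milling/titanium/"

site_links = {
    "/": {PILLAR},
    PILLAR: {"/", ALUMINUM, TITANIUM},        # hub links down to spokes
    ALUMINUM: {"/", PILLAR, TITANIUM},        # spoke links up and laterally
    TITANIUM: {PILLAR, ALUMINUM},
    "/materials/peek/": {PILLAR},             # published but never linked to
}

weak_pages = under_linked(site_links)
```

Here `/materials/peek/` surfaces with zero referrers, which marks it as an orphan that needs a link from its hub and at least one sibling.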

How Deep Should a Product or Process Page Sit in the Site Tree?

A product or process page should sit no deeper than three clicks from the homepage when measured by shortest path. Click depth correlates with crawl frequency and perceived importance. Depth also affects how measurable a page is: according to web.dev, each Core Web Vitals metric uses the 75th percentile value of all page views to a page or site to classify performance, and if at least 75% of page views meet the good threshold, the site is classified as having good performance for that metric. Pages buried five or six clicks deep rarely accumulate enough sessions to register meaningful performance data. Engineers should flatten taxonomy and surface deep leaves through hub pages, sitemaps, and contextual links inside guides.
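Shortest-path click depth is a breadth-first search over the internal link graph. The sketch below assumes the graph is available as a mapping from page to outgoing links (for example, exported from a crawler); the paths are hypothetical.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Return the minimum number of clicks from `home` to every reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


site_links = {
    "/": ["/capabilities/"],
    "/capabilities/": ["/capabilities/cnc-milling/"],
    "/capabilities/cnc-milling/": ["/capabilities/cnc-milling/titanium/", "/"],
    "/capabilities/cnc-milling/titanium/": ["/capabilities/cnc-milling/titanium/grade-5/"],
}

depths = click_depths(site_links)
too_deep = [page for page, depth in depths.items() if depth > 3]
```

Pages missing from the result entirely are unreachable from the homepage, which is a stronger warning than excessive depth.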

How Should Faceted Navigation Be Handled to Avoid Index Bloat?

Faceted navigation should be handled to avoid index bloat by limiting which filter combinations are crawlable, canonicalizing variants to the parent category, and choosing parameter syntax carefully. According to Google Crawling Infrastructure Documentation, engineers should use the industry standard URL parameter separator '&', because characters like comma, semicolon, and brackets are hard for crawlers to detect as parameter separators in faceted navigation URLs. Combine three tactics, each applied to a different set of URLs: rel="canonical" pointing low-value combinations to the canonical category, robots.txt blocks for parameter-only URLs that produce thin pages, and noindex on filter URLs that must remain crawlable for users. Do not stack noindex or canonical tags on a URL that robots.txt blocks, because crawlers cannot read directives on pages they are not allowed to fetch. Architecture choices made here directly fund the page experience work covered next.
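One way to keep the three tactics from overlapping is to route every URL through a single policy function. The sketch below is illustrative only: the parameter names and the rule that a lone `material` facet is indexable are assumptions, and each catalog would substitute its own lists.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical parameter classes; replace with the catalog's real parameters.
CRAWL_TRAP_PARAMS = {"sessionid", "sort", "view"}   # thin, near-infinite variations
KNOWN_FACETS = {"material", "tolerance", "finish"}
INDEXABLE_FACETS = {"material"}                     # facets with their own search demand


def facet_policy(url: str) -> str:
    """Return exactly one treatment for a URL so directives never conflict."""
    query = urlsplit(url).query
    names = sorted({name for name, _ in parse_qsl(query, keep_blank_values=True)})
    if not names:
        return "index"
    if any(name in CRAWL_TRAP_PARAMS for name in names):
        return "robots-block"
    if len(names) == 1 and names[0] in INDEXABLE_FACETS:
        return "index"
    if all(name in KNOWN_FACETS for name in names):
        return "canonical-to-category"
    return "noindex"


policy = facet_policy("https://example.com/materials/?material=titanium&finish=anodized")
```

Because the function returns a single value, a URL can never be both blocked in robots.txt and tagged noindex.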

How Should Engineers Optimize Page Experience and Core Web Vitals?

Engineers should optimize page experience and Core Web Vitals by setting performance budgets at the framework layer, instrumenting field metrics from real users, and treating LCP, INP, CLS, and TTFB as engineering KPIs. Sub-sections define each metric, map them to ranking, and walk through image, server, and mobile optimizations.

What Are Largest Contentful Paint, Interaction to Next Paint, and Cumulative Layout Shift?

Largest Contentful Paint, Interaction to Next Paint, and Cumulative Layout Shift are the three Core Web Vitals that quantify load speed, responsiveness, and visual stability respectively. According to web.dev, LCP should occur within 2.5 seconds of when the page first starts loading, pages should have an INP of 200 milliseconds or less, and pages should maintain a CLS of 0.1 or less, all measured at the 75th percentile of page loads, segmented across mobile and desktop. LCP measures when the largest image or text block in the viewport renders. INP observes the latency of every user interaction across the page lifetime and reports a value close to the slowest one. CLS measures unexpected movement of visible content across the page lifetime, not only during load.
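To make the 75th-percentile rule concrete, the sketch below classifies a batch of field samples. The "good" limits are the ones quoted above; the "poor" boundaries (4.0 s, 500 ms, 0.25) are web.dev's published values and are not stated in this guide. The nearest-rank percentile is one common definition and may differ slightly from what a given RUM vendor computes.

```python
import math

# (good_max, poor_min) per metric: LCP in seconds, INP in milliseconds, CLS unitless.
THRESHOLDS = {"LCP": (2.5, 4.0), "INP": (200, 500), "CLS": (0.1, 0.25)}


def p75(samples: list[float]) -> float:
    """Nearest-rank 75th percentile of a non-empty sample list."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def classify(metric: str, samples: list[float]) -> str:
    """Return 'good', 'needs improvement', or 'poor' for the metric's p75."""
    good_max, poor_min = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good_max:
        return "good"
    return "poor" if value > poor_min else "needs improvement"


lcp_rating = classify("LCP", [1.8, 2.0, 2.2, 2.4, 3.9, 5.0, 2.1, 1.9])
```

Note that two slow loads out of eight do not change the rating here, because the 75th percentile sample is still 2.4 seconds.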

How Do Core Web Vitals Affect Search Rankings on Industrial Sites?

Core Web Vitals affect search rankings on industrial sites as part of Google's page experience signals. They are not standalone ranking factors that override content quality, but they act as tiebreakers among pages of similar relevance and authority. Slow LCP on a long-tail capability page can suppress rankings against a faster competitor with equivalent content. Industrial sites often carry heavy CAD images, video demos, and feature comparison tables, so vitals work tends to focus on render-blocking resources, font loading, and lazy-loading below-the-fold media.

What Image and CAD Asset Optimizations Improve Page Speed?

The image and CAD asset optimizations that improve page speed are modern format conversion, responsive sizing, lazy loading, and dimension attributes. There are several practical tactics: convert JPG/PNG hero shots to AVIF or WebP, serve responsive sizes with srcset, defer below-the-fold media with loading="lazy", reserve space with width and height attributes to prevent CLS, and host CAD previews as raster snapshots with a separate downloadable STEP file. CDN-level image optimization handles format negotiation per browser. Replace decorative animation with static SVG. Audit the largest 20 images on each template before shipping.
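The markup tactics above (srcset, explicit dimensions, lazy loading) can be generated by one template helper. This is a sketch under assumptions: the `?w=` width parameter is a hypothetical CDN convention, and `sizes="100vw"` is a placeholder that a real template would tune per layout.

```python
from html import escape


def responsive_img(base: str, alt: str, widths: list[int],
                   width: int, height: int, lazy: bool = True) -> str:
    """Render an <img> with srcset, reserved dimensions, and optional lazy loading."""
    srcset = ", ".join(f"{base}?w={w} {w}w" for w in sorted(widths))
    attrs = {
        "src": f"{base}?w={max(widths)}",
        "srcset": srcset,
        "sizes": "100vw",
        "width": str(width),    # width and height reserve space and prevent CLS
        "height": str(height),
        "alt": alt,
    }
    if lazy:
        attrs["loading"] = "lazy"
    rendered = " ".join(f'{name}="{escape(value, quote=True)}"' for name, value in attrs.items())
    return f"<img {rendered}>"


tag = responsive_img(
    "https://cdn.example.com/img/housing.avif",
    "Machined aluminum housing",
    widths=[480, 960, 1600],
    width=1600,
    height=900,
)
```

Pass `lazy=False` for the hero image: lazy-loading the element that is likely to be the LCP candidate delays it.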

How Can Engineers Improve Time to First Byte on Catalog Pages?

Engineers can improve Time to First Byte on catalog pages by tuning origin server response time, caching at the edge, and reducing database round-trips. According to web.dev, good TTFB values are 0.8 seconds or less, and poor values are greater than 1.8 seconds. Practical levers: enable HTTP/2 or HTTP/3, place a CDN in front of the origin, pre-render product templates at build time when stock data is static, cache database queries for category and faceted pages, and right-size the application server. TTFB precedes every other loading metric, so improvements here propagate to LCP.

Why Does Mobile Usability Matter for Procurement-Intent Searches?

Mobile usability matters for procurement-intent searches because Google now indexes the smartphone-rendered version of a site, and procurement researchers increasingly read product pages from phones during plant walks, supplier visits, and conferences. Tap targets must clear minimum size thresholds, forms must work without zooming, and quote request CTAs must remain in the viewport. Mobile usability defects compound with vitals defects: a slow mobile page that also requires pinch-to-zoom to read tolerances loses both the user and the ranking. The following section moves from page experience to the structured data that makes manufacturing entities legible.

How Should Engineers Implement Structured Data and Schema for Manufacturing?

Engineers should implement structured data and schema for manufacturing by serializing entities as JSON-LD, mapping each page template to the most specific Schema.org type, and validating output against authoritative testers. Sub-sections cover the schema types that apply, how to combine them, FAQ and breadcrumb usage, sameAs grounding, and validation tooling.

What Schema Types Apply to Products, Services, and Processes?

The schema types that apply to products, services, and processes are Product, Service, and (by extension) DefinedTerm or HowTo for process explanations. According to Schema.org, the Product type represents any offered product or service, such as a pair of shoes, a concert ticket, the rental of a car, a haircut, or an episode of a TV show streamed online, and the schema integrates with GoodRelations terminology for sharing e-commerce data. For manufacturing, Product fits SKU-level and material-grade pages, Service fits capability pages such as CNC milling or injection molding, and Organization wraps the corporate identity. Choose the most specific type the page actually represents.

How Should Organization, LocalBusiness, and Product Schema Be Combined?

Organization, LocalBusiness, and Product schema should be combined as a connected JSON-LD graph using @id references, not as duplicated literal blocks. According to Schema.org, Organization schema represents an organization such as a school, NGO, corporation, club, etc., and supports properties for entity disambiguation including sameAs URLs to Wikipedia, Wikidata, and official social profiles. The pattern: emit one Organization (or LocalBusiness for facility pages) at the site level with "@id": "https://example.com/#org", then have each Product reference it through brand, each Service through provider, and each Article through publisher, for example brand: { "@id": "https://example.com/#org" }. This eliminates redundancy and makes the graph crawlable as one entity network.
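A template-side sketch of the @id pattern follows. The company name, LinkedIn URL, and product details are placeholders, and the property set is deliberately minimal; real pages would add offers, images, and other properties that the target rich result requires.

```python
import json

ORG_ID = "https://example.com/#org"


def build_graph(product_name: str, product_url: str) -> dict:
    """Return a JSON-LD graph in which the Product points at a single Organization node."""
    organization = {
        "@type": "Organization",
        "@id": ORG_ID,
        "name": "Example Manufacturing",  # placeholder
        "url": "https://example.com/",
        "sameAs": ["https://www.linkedin.com/company/example-manufacturing"],  # placeholder
    }
    product = {
        "@type": "Product",
        "@id": f"{product_url}#product",
        "name": product_name,
        "url": product_url,
        "brand": {"@id": ORG_ID},  # a reference, not a second copy of the Organization
    }
    return {"@context": "https://schema.org", "@graph": [organization, product]}


graph = build_graph("6061 Aluminum Bracket", "https://example.com/materials/6061-aluminum/")
json_ld = json.dumps(graph, indent=2)  # embed inside <script type="application/ld+json">
```

Because the Organization is defined once, a change to its sameAs list propagates to every page without touching product templates.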

How Do FAQ, HowTo, and Breadcrumb Schemas Support Technical Content?

FAQ, HowTo, and Breadcrumb schemas support technical content by structuring question-answer pairs, ordered process steps, and hierarchical site paths so that search engines can extract them for richer presentations. Manufacturing content uses BreadcrumbList on every catalog page, FAQ on capability and material pages where common procurement questions exist, and HowTo for step-based process explanations. Note that Google has narrowed eligibility for some rich result types over time, so the SEO benefit may be entity comprehension rather than rich snippet display. Implement them anyway; structured data still feeds the Knowledge Graph.

How Should sameAs Properties Be Used for Entity Grounding?

The sameAs property should be used for entity grounding by listing authoritative external URLs that identify the same real-world entity. Add sameAs links to Wikipedia, Wikidata, official LinkedIn, Crunchbase, the company's Bloomberg profile, and any government registry entry on the Organization node. According to HTTP Archive Web Almanac, overall structured data usage reached 49% of mobile home pages and 48% of desktop home pages, with RDFa present on 66% of pages, JSON-LD on 41%, and Microdata on 26%. JSON-LD is the format Google recommends for structured data in general, which makes it the natural place for organization-level sameAs grounding.

How Can Engineers Validate Schema with Rich Results Test and Schema.org Validator?

Engineers can validate schema with the Rich Results Test and the Schema.org Validator by submitting the rendered URL or pasting the JSON-LD block and reading the parser output. According to Google Search Central, FAQ rich results are only available for well-known, authoritative websites that are government-focused or health-focused after the eligibility narrowing. Use the Rich Results Test for any feature Google explicitly supports (Product, BreadcrumbList, Organization). Use the Schema.org Validator for general syntactic correctness on types Google does not display as rich results. Add both checks to CI on a sampled URL set so schema regressions break the build before deployment.
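For the CI step, a local pre-check can catch malformed JSON-LD before a page ever reaches the external validators. The sketch below is not a substitute for the Rich Results Test or the Schema.org Validator: it only confirms that each block parses and carries the fields a team has decided to require. The `REQUIRED` mapping is a hypothetical house policy, not Google's list of required properties.

```python
import json
from html.parser import HTMLParser

# Hypothetical house policy: the minimum fields each type must carry.
REQUIRED = {
    "Product": {"name"},
    "Organization": {"name", "url"},
    "BreadcrumbList": {"itemListElement"},
}


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._buffer: list[str] = []
        self._inside = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside, self._buffer = True, []

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._inside:
            self.blocks.append("".join(self._buffer))
            self._inside = False


def schema_errors(html: str) -> list[str]:
    """Return one message per unparseable block or node missing required fields."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    errors = []
    for raw in extractor.blocks:
        try:
            doc = json.loads(raw)
        except json.JSONDecodeError as exc:
            errors.append(f"invalid JSON: {exc.msg}")
            continue
        if isinstance(doc, dict):
            nodes = doc.get("@graph", [doc])
        else:
            nodes = doc if isinstance(doc, list) else []
        for node in nodes:
            if not isinstance(node, dict) or not isinstance(node.get("@type"), str):
                continue
            missing = REQUIRED.get(node["@type"], set()).difference(node.keys())
            if missing:
                errors.append(f"{node['@type']} missing: {', '.join(sorted(missing))}")
    return errors
```

A CI job would render a sampled set of URLs, call `schema_errors` on each response, and fail the build when the list is non-empty.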

What Are the Rendering, JavaScript, and Hosting Choices That Affect SEO?

The rendering, JavaScript, and hosting choices that affect SEO are how HTML is generated and delivered, how JavaScript executes for crawlers, which rendering mode the framework defaults to, and how hosting decisions shape latency and uptime. Sub-sections compare modes and weigh tradeoffs.

How Does Server-Side Rendering Compare to Client-Side Rendering for Catalogs?

Server-side rendering differs from client-side rendering for catalogs by delivering fully formed HTML on the first response, while client-side rendering delivers a JavaScript shell that builds the content in the browser after execution. According to the Wikipedia entry on hydration, server-side rendering makes HTML available to the client before JavaScript loads, and hydration is a technique in which client-side JavaScript converts a web page that is static from the perspective of the web browser into a dynamic web page by attaching event handlers to the HTML elements in the DOM. For catalogs with thousands of indexable URLs, SSR or static rendering is the safer default because it removes a render queue dependency for crawlers.

What Are the Indexing Risks of JavaScript-Heavy Frameworks?

The indexing risks of JavaScript-heavy frameworks are delayed rendering, partial content discovery, and broken hydration that hides text from crawlers. Googlebot defers JavaScript execution to a separate render queue, which can lag the initial crawl by minutes or longer. If product specifications, prices, or canonical tags only appear after client-side execution, they may miss the indexing window entirely. Risks compound on dynamic routes generated at runtime, on lazy-loaded route chunks, and on personalization layers that vary content per session. Engineers should ensure all SEO-critical content lives in the initial HTML response.
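A quick way to test the last point is to inspect the raw server response, before any JavaScript runs, for the strings that must be indexable. The sketch below assumes the HTML has already been fetched without rendering; the two sample documents and the required snippets are invented for illustration.

```python
def missing_from_initial_html(raw_html: str, required: list[str]) -> list[str]:
    """Return the required snippets that do not appear in the server's first response."""
    haystack = raw_html.lower()
    return [snippet for snippet in required if snippet.lower() not in haystack]


# A client-rendered shell: content arrives only after JavaScript executes.
CSR_SHELL = '<html><head><title>Loading</title></head><body><div id="root"></div></body></html>'

# A server-rendered page: canonical, heading, and spec row are in the first response.
SSR_PAGE = (
    '<html><head><link rel="canonical" href="https://example.com/materials/titanium/">'
    "</head><body><h1>Titanium Grade 5</h1><td>Tensile strength</td></body></html>"
)

REQUIRED_SNIPPETS = ['rel="canonical"', "Titanium Grade 5", "Tensile strength"]

csr_gaps = missing_from_initial_html(CSR_SHELL, REQUIRED_SNIPPETS)
ssr_gaps = missing_from_initial_html(SSR_PAGE, REQUIRED_SNIPPETS)
```

Any non-empty result identifies content that depends on the render queue and is therefore at risk of delayed or missed indexing.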

How Should Engineers Choose Between Static, Hybrid, and Dynamic Rendering?

Engineers should choose between static, hybrid, and dynamic rendering by matching the rendering mode to content volatility and personalization needs. According to Google Search Central, dynamic rendering was a workaround and not a long-term solution for problems with JavaScript-generated content in search engines, and instead Google recommends server-side rendering, static rendering, or hydration as a solution. Static rendering fits stable capability and material pages built once at deploy time. Hybrid (incremental static regeneration) fits product pages that change weekly. SSR fits inventory dashboards and account areas. Avoid client-only rendering for any indexable URL.

What Hosting Decisions Affect Crawl Speed and Uptime for Industrial Sites?

The hosting decisions that affect crawl speed and uptime for industrial sites are origin server location, CDN coverage, autoscaling configuration, and HTTP protocol support. Place origin servers near the primary buyer geography, deploy a CDN with edge nodes in target markets, configure autoscaling so traffic spikes (or crawl bursts) do not return 5xx errors, enable HTTP/2 or HTTP/3 for multiplexed requests, and monitor uptime through synthetic checks. Crawl rate adapts down when servers slow or fail, so hosting reliability directly governs how quickly new pages enter the index. The next section turns to the audit and monitoring layer that confirms these decisions are working.

How Should Engineers Audit, Monitor, and Report on Technical SEO Health?

Engineers should audit, monitor, and report on technical SEO health by combining crawl simulators, log file analysis, real-user monitoring, and stakeholder dashboards into a repeatable cadence. Sub-sections cover the audit toolset, log workflows, dashboard composition, and stakeholder communication.

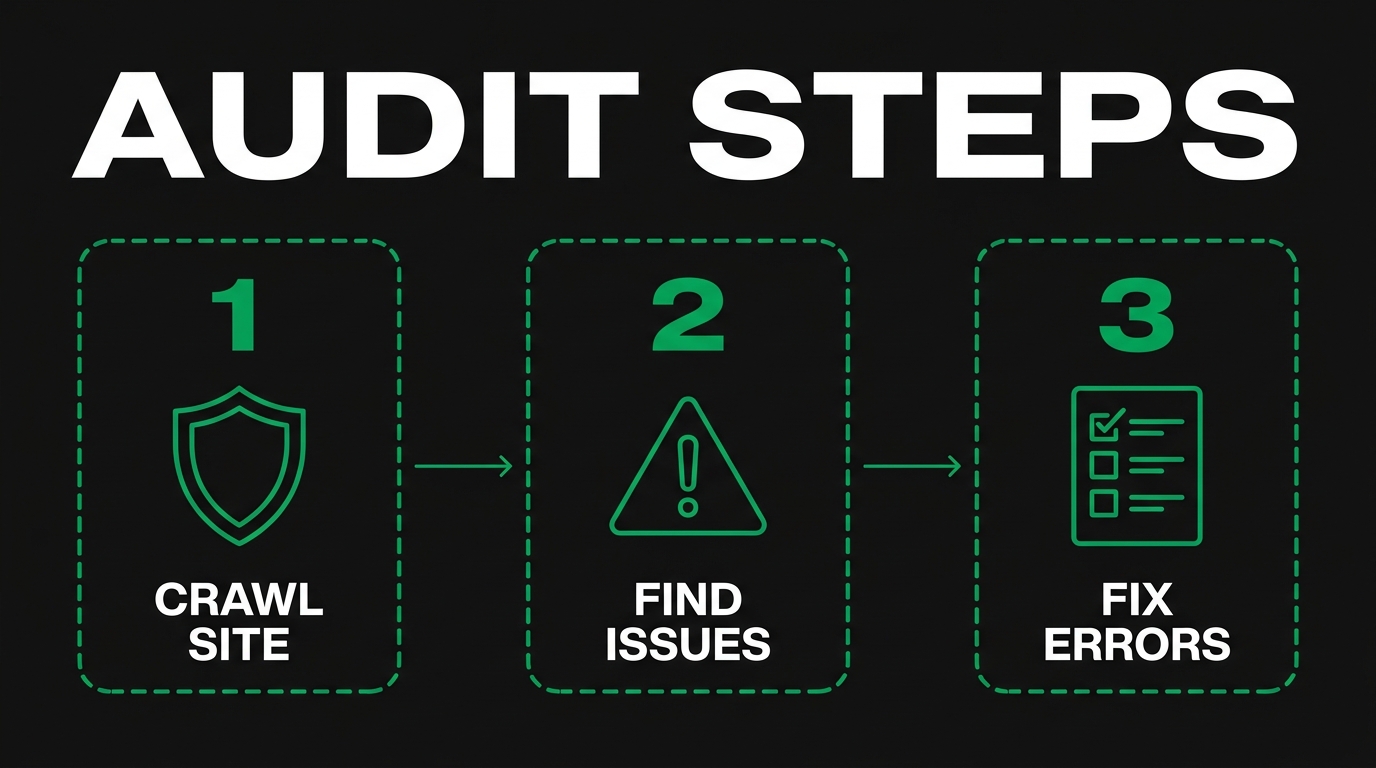

What Tools Should Engineers Use for a Technical SEO Audit?

The tools engineers should use for a technical SEO audit are a desktop crawler (Screaming Frog, Sitebulb, or Lumar), Google Search Console, Bing Webmaster Tools, the Rich Results Test, the Schema.org Validator, the URL Inspection API, and a real-user monitoring source such as the Chrome User Experience Report (CrUX) for field vitals. Run a baseline crawl monthly, validate sitemaps quarterly, and run rich-results tests on a sampled URL set in CI. Pair scheduled audits with continuous monitoring so regressions surface within hours, not at the next quarterly review. Reviewing peer outcomes helps too; see the case studies on technical SEO for heavy industry.

How Should Log File Analysis Inform Crawl Optimization?

Log file analysis should inform crawl optimization by revealing exactly which URLs Googlebot fetched, how often, and with what response code, so engineers can spot wasted crawl on parameter URLs, redirect chains, or 5xx errors. According to Digital.gov, an XML sitemap is a file that lists URLs for a site, along with additional metadata about each URL (when it was last updated, how often it changes, and how important it is relative to other URLs in the site) so search engines can crawl the site more intelligently. Cross-reference sitemap URLs against log hits: any sitemap URL that is never crawled is a discovery problem; any non-sitemap URL crawled heavily is a budget leak.
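The cross-reference described above can be sketched as follows, assuming access logs in the common "combined" format. The regular expression and sample lines are illustrative. Matching on the user-agent string alone is a simplification, since the header can be spoofed; a production pipeline would also verify the requesting IP.

```python
import re
from collections import Counter

# Matches the request, status, and trailing quoted user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits(log_lines: list[str]) -> Counter:
    """Count (path, status) pairs for requests whose user agent names Googlebot."""
    hits: Counter = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match["agent"]:
            hits[(match["path"], int(match["status"]))] += 1
    return hits


def crawl_gaps(hits: Counter, sitemap_paths: set[str]) -> dict[str, list[str]]:
    """Compare crawled paths with sitemap paths in both directions."""
    crawled = {path for path, _status in hits}
    return {
        "never_crawled": sorted(sitemap_paths - crawled),    # discovery problem
        "outside_sitemap": sorted(crawled - sitemap_paths),  # possible budget leak
    }


BOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [10/May/2024:06:12:01 +0000] "GET /capabilities/cnc-milling/ HTTP/1.1" 200 5120 "-" "{BOT}"',
    f'66.249.66.1 - - [10/May/2024:06:12:03 +0000] "GET /search?sort=price HTTP/1.1" 200 901 "-" "{BOT}"',
    '203.0.113.9 - - [10/May/2024:06:13:00 +0000] "GET /materials/titanium/ HTTP/1.1" 200 4410 "-" "Mozilla/5.0"',
]

gaps = crawl_gaps(
    googlebot_hits(sample_log),
    sitemap_paths={"/capabilities/cnc-milling/", "/materials/titanium/"},
)
```

In this sample the titanium page is in the sitemap but was only visited by a browser, while the bot spent a fetch on a sort parameter URL.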

What Should Be in a Technical SEO Monitoring Dashboard?

A technical SEO monitoring dashboard should contain index coverage by template, Core Web Vitals at the 75th percentile, sitemap submission status, robots.txt fetch status, structured data error counts, server response code distribution, average TTFB, mobile usability errors, and crawl rate from log files. Include thresholds that trigger alerts: an indexed page count drop of more than 5%, a vitals regression of more than 10%, or a 5xx error rate above baseline. Version-control the dashboard definition so configuration drift is reviewable. Stakeholders should see one dashboard per audience: engineering, marketing, and executive.
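The alert thresholds above reduce to a comparison between two snapshots. In this sketch the snapshot keys (`indexed_pages`, `lcp_p75`, `error_5xx_rate`) are hypothetical field names, LCP stands in for all vitals, and the previous snapshot is treated as the 5xx baseline.

```python
def triggered_alerts(previous: dict[str, float], current: dict[str, float]) -> list[str]:
    """Return the names of the metrics that crossed their alert threshold."""
    alerts = []
    index_drop = (previous["indexed_pages"] - current["indexed_pages"]) / previous["indexed_pages"]
    if index_drop > 0.05:  # indexed page count fell by more than 5%
        alerts.append("indexed_pages")
    lcp_regression = (current["lcp_p75"] - previous["lcp_p75"]) / previous["lcp_p75"]
    if lcp_regression > 0.10:  # p75 LCP got more than 10% slower
        alerts.append("lcp_p75")
    if current["error_5xx_rate"] > previous["error_5xx_rate"]:  # above baseline
        alerts.append("error_5xx_rate")
    return alerts


last_week = {"indexed_pages": 10000, "lcp_p75": 2.0, "error_5xx_rate": 0.001}
this_week = {"indexed_pages": 9400, "lcp_p75": 2.1, "error_5xx_rate": 0.001}

alerts = triggered_alerts(last_week, this_week)
```

Keeping the thresholds in code alongside the dashboard definition makes changes to alert sensitivity reviewable in the same way as any other configuration change.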

How Should Engineers Communicate Technical SEO Wins to Procurement and Marketing Stakeholders?

Engineers should communicate technical SEO wins to procurement and marketing stakeholders by translating metrics into pipeline language: indexed capability pages, qualified organic sessions, RFQ submissions, and revenue-attributed organic conversions. Replace LCP milliseconds with "page X now loads twice as fast and its RFQ conversion rate is Y% higher." Pair every technical metric with a business metric. Use monthly readouts that show before-and-after, name the engineering changes, and credit the team. The bridge to commercial outcomes earns sustained engineering investment. The next section explains how a specialized partner can help engineering teams ship these best practices.

How Should Engineers Approach Technical SEO With Manufacturing SEO Agency?

Engineers should approach technical SEO with Manufacturing SEO Agency by treating the agency as the strategic and remediation partner that translates engineering work into procurement-intent rankings, RFQ pipeline, and closed revenue. Manufacturing SEO Agency focuses exclusively on B2B manufacturers, so the recommendations align with how procurement actually buys. Sub-sections cover the remediation service and the article's takeaways, including links to broader manufacturer guidance such as heavy industry seo best practices, basic seo strategies for manufacturers, and contract manufacturing vs custom manufacturing seo.

Can Manufacturing SEO Agency's Technical SEO Remediation Service Help Engineers Implement These Best Practices?

Yes, Manufacturing SEO Agency's manufacturing technical seo audit and remediation service can help engineers implement these best practices by running a full technical crawl, mapping crawl waste, validating schema, tuning page experience, and pairing every fix with the procurement-intent keyword and content architecture that earns rankings. Manufacturing SEO Agency offers manufacturing audit and competitive intelligence, procurement-intent keyword architecture, topical authority buildout, and technical SEO remediation as connected workstreams. Engineers ship the code; Manufacturing SEO Agency provides the priority list, the schema patterns, and the revenue-tied reporting that proves the work is moving pipeline. The engagement model fits in-house engineering teams that need a specialist partner for the SEO layer.

What Are the Key Takeaways About Technical SEO for Engineers We Covered?

The key takeaways about technical SEO for engineers we covered are these: technical SEO is an engineering responsibility because every control surface lives in the codebase; crawl budget and indexation depend on robots.txt, sitemaps, and canonical hygiene; site architecture and faceted navigation decide which pages compound topical authority; Core Web Vitals and TTFB are engineering KPIs measured at the 75th percentile; structured data with sameAs grounds the manufacturing entity in the Knowledge Graph; server-side or static rendering remains the safest default for indexable catalog pages; and audit, log analysis, and stakeholder dashboards close the loop between engineering changes and procurement outcomes. Manufacturing SEO Agency partners with engineering teams to apply this stack to the procurement queries that fund the business.